Higher Memory Capacity

The H200 features 141 GB of HBM3e memory, nearly double the 80 GB of the H100.
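
To make "nearly double" concrete, the sketch below works through the capacity arithmetic. It assumes GB means 10^9 bytes, uses the 80 GB H100 SXM as the comparison point, and counts model weights only (no KV cache, activations, or framework overhead). The helper names are illustrative, not part of any NVIDIA tool.

```python
# Back-of-the-envelope capacity arithmetic for the H200 (illustrative sketch).
# Assumptions: 1 GB = 10**9 bytes; H100 baseline = 80 GB H100 SXM;
# only weights are counted, so real models need extra headroom.

H200_MEMORY_GB: float = 141.0
H100_MEMORY_GB: float = 80.0  # assumed H100 SXM baseline

# Bytes needed to store one parameter in common numeric formats.
BYTES_PER_PARAM = {"fp32": 4, "fp16/bf16": 2, "fp8": 1}


def capacity_ratio(new_gb: float, old_gb: float) -> float:
    """How many times larger the new memory pool is."""
    return new_gb / old_gb


def max_params_billions(memory_gb: float, bytes_per_param: int) -> float:
    """Upper bound on parameters (in billions) whose weights fit in memory.

    1 GB = 1e9 bytes and 1B params = 1e9 params, so the 1e9 factors cancel.
    """
    return memory_gb / bytes_per_param


if __name__ == "__main__":
    ratio = capacity_ratio(H200_MEMORY_GB, H100_MEMORY_GB)
    print(f"H200 / H100 capacity: {ratio:.2f}x")
    for dtype, nbytes in BYTES_PER_PARAM.items():
        params = max_params_billions(H200_MEMORY_GB, nbytes)
        print(f"{dtype:>9}: ~{params:.1f}B parameters (weights only)")
```

Under these assumptions the ratio is about 1.76x, and the weights of a model of roughly 70 billion parameters in FP16/BF16 would fit on a single H200, before accounting for any runtime memory.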

Increased Memory Bandwidth

With 4.8 TB/s of memory bandwidth, about 1.4X that of the H100, the H200 can feed data to its compute units faster, which speeds up memory-bound workloads.
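
A rough way to see why bandwidth matters for LLM inference is a bandwidth-only estimate, sketched below. It assumes TB/s means 10^12 bytes per second, takes the H100 SXM's 3.35 TB/s as the baseline, and models single-stream decoding as purely memory-bound (every weight byte read once per generated token, batch size 1, no compute limits). These are simplifying assumptions, so the results are ceilings, not measured throughput; the function names are hypothetical.

```python
# Bandwidth-only estimates for the H200 (illustrative sketch, not a benchmark).
# Assumptions: 1 TB/s = 10**12 bytes/s; H100 baseline = 3.35 TB/s (H100 SXM);
# decoding reads all weights once per token and nothing else limits speed.

H200_BW_TBPS: float = 4.8
H100_BW_TBPS: float = 3.35  # assumed H100 SXM baseline


def bandwidth_ratio(new_tbps: float, old_tbps: float) -> float:
    """How many times more bandwidth the new GPU has."""
    return new_tbps / old_tbps


def full_sweep_ms(memory_gb: float, bw_tbps: float) -> float:
    """Milliseconds needed to read `memory_gb` of data once at `bw_tbps`."""
    return memory_gb * 1e9 / (bw_tbps * 1e12) * 1e3


def decode_tokens_per_s_ceiling(weight_gb: float, bw_tbps: float) -> float:
    """Idealized single-stream tokens/s if each token must read all weights."""
    return bw_tbps * 1e12 / (weight_gb * 1e9)


if __name__ == "__main__":
    print(f"H200 / H100 bandwidth: {bandwidth_ratio(H200_BW_TBPS, H100_BW_TBPS):.2f}x")
    print(f"Reading all 141 GB once: {full_sweep_ms(141.0, H200_BW_TBPS):.1f} ms")
    # Hypothetical example: ~70B parameters in FP16 is ~140 GB of weights.
    print(f"Decode ceiling for 140 GB of weights: "
          f"{decode_tokens_per_s_ceiling(140.0, H200_BW_TBPS):.1f} tokens/s")
```

The ratio comes out near 1.43, consistent with the 1.4X figure, and the per-token ceiling scales linearly with bandwidth, which is why memory-bound decoding benefits directly from the H200's faster HBM3e.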

Enhanced AI Performance

The H200 is optimized for generative AI and large language models (LLMs), speeding up both model training and inference.

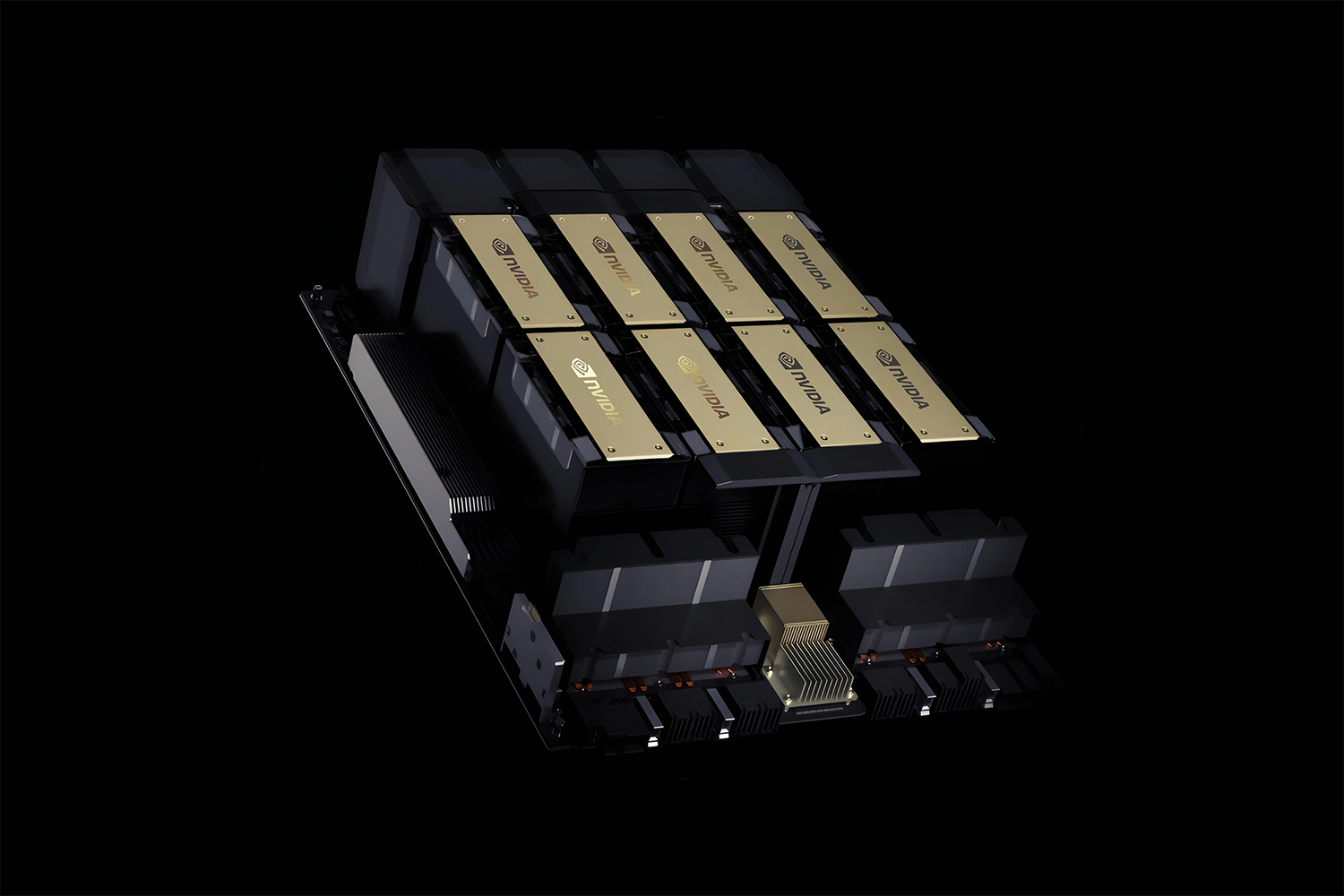

NVIDIA H200 Tensor Core GPU

The NVIDIA H200 Tensor Core GPU is designed to accelerate generative AI and high-performance computing (HPC) workloads with higher performance and expanded memory capabilities. As the first GPU equipped with HBM3e memory, the H200 delivers larger and faster memory, accelerating the development of large language models (LLMs) and advancing scientific computing for HPC workloads.

Experience cutting-edge advancements in AI and HPC with the NVIDIA H200 GPU, ideal for demanding AI models and intensive computing applications.